|

I am in the Central Applied Science group at Meta (formerly known as Facebook). I build statistics and optimization tools, develop new ways of analyzing data, and run large online experiments. Prior to this I finished my PhD in operations research at MIT. I can be contacted at bletham@meta.com. |

|

News from the last year: June 2023: When asking humans about preferences or perception, you can also measure response time. We have submitted a paper about how to incorporate this information into a nonparametric response surface model, now on arxiv! (arxiv) April 2023: We wrote a paper on using regularization to find sparse solutions in Bayesian optimization, now published at AISTATS! (paper) March 2023: Nonparametric response surface modeling was part of a platform for understanding hardware requirements for distortion-free rendering in AR and VR. The rest of the platform is very impressive. (arxiv) February 2023: I made the list of "top reviewers" for AISTATS here. I guess I should ask for a raise next year! February 2023: We have a paper published at AAAI on combining Gaussian process models with parametric models from psychology, a great way to combine domain knowledge with flexible nonparametric modeling. (paper) December 2022: We had a paper about personalized experimentation at the NeurIPS Workshop on Causal Inference Challenges in Sequential Decision Making. (arxiv) |

Bayesian optimization and experimentation

Bayesian optimization with online field experiments

At Facebook we have many backend systems with parameters that could presumably be optimized to improve system performance. However, often the only way to measure performance is through time consuming, noisy field experiments. In this paper we describe methodological advances for using Bayesian optimization to design a set of experiments that efficiently search the parameter space. The paper also describes two systems optimizations that we've done with this approach: tuning a production ranking system, and optimizing HHVM compiler flags.

Benjamin Letham, Brian Karrer, Guilherme Ottoni, Eytan Bakshy (2019) Constrained Bayesian optimization with noisy experiments. Bayesian Analysis 14(2): 495-519. (pdf) (supplement) (erratum) (blog post)

Ranking systems can be naively simulated offline by replaying sessions to a simulator that produces the ranked list, and then applying existing event prediction models to predict online outcomes. This approach provides a high-throughput way of testing new value model policies, but the estimates suffer from off-policy bias and related issues and end up being too inaccurate to be directly useful. In this paper we show that these biased offline estimates can be combined with online A/B tests through multi-task Bayesian optimization. Including the simulator in the optimization provides significant gains, and we study the empirical generalization behavior. We used this approach to successfully improve the News Feed ranking model.

Benjamin Letham, Eytan Bakshy (2019) Bayesian optimization for policy search via online-offline experimentation. Journal of Machine Learning Research 20(145): 1-30. (pdf) (supplement) (blog post)

Our software for doing Bayesian Optimization is built on PyTorch. It is open sourced as a pair of packages (Ax and BoTorch), and this paper describes the framework and concepts behind using differentiable programming for Bayesian optimization.

Maximilian Balandat, Brian Karrer, Daniel R. Jiang, Samuel Daulton, Benjamin Letham, Andrew Gordon Wilson, Eytan Bakshy (2020) BoTorch: a framework for efficient Monte-Carlo Bayesian optimization. In: Advances in Neural Information Processing Systems 33, NeurIPS '20. (proceedings)

High-dimensional Bayesian optimization

Typical approaches for Bayesian optimization perform poorly in high dimensions. One approach for doing Bayesian optimization in high dimensions is to do the optimization in a low-dimensional embedding, but this approach can perform badly even on problems that meet the underlying assumptions. We studied the failure points for linear embedding methods, and developed techniques for improving performance and reliability of Bayesian optimization in a linear embedding.

Benjamin Letham, Roberto Calandra, Akshara Rai, Eytan Bakshy (2020) Re-examining linear embeddings for high-dimensional Bayesian optimization. In: Advances in Neural Information Processing Systems 33, NeurIPS '20. (proceedings) (video)

One common setting that produces high-dimensional optimization problems is tuning contextual policies where some number of parameters are personalized for each of several contexts. When outcomes can only be measured at some high-level aggregate (as in an A/B testing setting), the entire policy must be tuned jointly, which produces a high-dimensional problem. However, this problem has particular structure that we can take advantage of to construct new models that we show are able to find good contextual policies.

Qing Feng, Benjamin Letham, Hongzi Mao, Eytan Bakshy (2020) High-dimensional contextual policy search with unknown context rewards using Bayesian optimization. In: Advances in Neural Information Processing Systems 33, NeurIPS '20. (proceedings)

In real systems, interpretability is often very important, especially in high dimensions. We show how regularization and ideas from multi-objective optimization can be used to find sparse solutions that lend themselves to interpretability.

Sulin Liu, Qing Feng, David Eriksson, Benjamin Letham, Eytan Bakshy (2023) Sparse Bayesian optimization. In: Proceedings of the 26th International Conference on Artificial Intelligence and Statistics, AISTATS '23, pages 3754-3774. (pdf) (code)

Hyperparameter optimization

Hyperparameter optimization of machine learning models is a popular application of Bayesian optimization. In a machine learning platform we may have many similar models that are being optimized, and we may want to use the results of these optimizations to warm-start others. Here we developed a method for doing this, and used it for hyperparameter optimization in Facebook's computer vision platform.

Matthias Feurer, Benjamin Letham, Eytan Bakshy (2018) Scalable meta-learning for Bayesian optimization. In: ICML AutoML Workshop. (arxiv)

A significantly expanded and improved version of this paper is now on arxiv!

Matthias Feurer, Benjamin Letham, Frank Hutter, Eytan Bakshy (2022) Practical transfer learning for Bayesian optimization. Preprint. (arxiv)

Psychophysics

A common problem in psychophysics is identifying perceptual thresholds, the lowest stimulus intensity at which the stimulus is perceivable, often with respect to several other context parameters (like stimulus frequency, shape, etc.). Surrogate modeling with a GP and active learning of a similar flavor to Bayesian optimization can be highly effective for exploring these high-dimensional perception response surfaces and globally identifying thresholds. We've done work on making this easy to do, via an open-source toolbox AEPSych and an associated paper.

Lucy Owen, Jonathan Browder, Benjamin Letham, Gideon Stocek, Chase Tymms, Michael Shvartsman (2021) Adaptive nonparametric psychophysics. Preprint. (github, arxiv)

Identifying perceptual thresholds is a level-set estimation (LSE) problem. The best acquisition functions for LSE, look-ahead acquisition, relied on update formulae that were no longer analytical when working with Bernoulli observations, which are common in psychophysics. We developed a new class of look-ahead acquisition functions for Bernoulli observations that significantly improved active learning performance.

Benjamin Letham, Phillip Guan, Chase Tymms, Eytan Bakshy, Michael Shvartsman (2022) Look-ahead acquisition functions for Bernoulli level set estimation. In: Proceedings of the 25th International Conference on Artificial Intelligence and Statistics, AISTATS '22, pages 8493-8513. (pdf) (code) (video)

There is a large and old literature in psychology on measuring perceptual thresholds. We can combine parametric models from psychology with nonparametric GPs to get the best of both worlds.

Stephen Keeley, Benjamin Letham, Craig Sanders, Chase Tymms, Michael Shvartsman (2023) A semi-parametric model for decision making in high-dimensional sensory discrimination tasks. In: Proceedings of the 37th AAAI Conference on Artificial Intelligence, AAAI '23, pages 40-47. (pdf)

When asking humans their preferences or perception, we can measure reaction times alongside their response. This can be helpful for improving response surface models in parts of the parameter space where the human is uncertain about their preference/perception, in which case they often take longer to respond.

Michael Shvartsman, Benjamin Letham, Stephen Keeley (2023) Response time improves choice prediction and function estimation for Gaussian process models of perception and preferences. Submitted. (arxiv)

One of the applications of these psychophysics models is setting hardware requirements for AR and VR. This paper describes a platform for doing that, in which nonparametric modeling played a role.

Phillip Guan, Eric Penner, Joel Hegland, Benjamin Letham, Douglas Lanman (2023) Perceptual requirements for world-locked rendering in AR and VR. Submitted. (arxiv)

Forecasting

The Prophet forecasting package

Forecasting is a common data science task, yet also a specialized skill outside the expertise of many data scientists. The Prophet forecasting package is designed to be flexible enough to handle a range of business time series, while still being configurable by non-experts. We developed it for a collection of important forecasting tasks at Facebook, and have since open sourced it. It is built on Stan and has R and Python versions. It has received a tremendous response, filling a real need for forecasting these types of time series. There is code, a site with documentation, and a paper.

Sean J. Taylor, Benjamin Letham (2018) Forecasting at scale. The American Statistician 72(1): 37-45. (pdf) (code) (site)

Nonlinear dynamics and computational biology

Prediction uncertainty and optimal experimental design

During the work below on modeling the immune response to HIV infection, we ran into two questions for which we couldn't find a satisfactory answer. The first was to determine the extent to which the collected data constrained the model predictions (prediction uncertainty). The second was to figure out what additional experiments would be most helpful for reducing that uncertainty (optimal experimental design). While eating a hamburger one weekend, I had an idea for how both of these questions might be answered by a particular optimization problem. After hashing out the details, running some experiments, and doing some theoretical work, we wrote it up in this paper.

Benjamin Letham, Portia A. Letham, Cynthia Rudin, Edward P. Browne (2016) Prediction uncertainty and optimal experimental design for learning dynamical systems. Chaos 26: 063110. (pdf) (erratum)

The immune response to HIV infection

I provided computational and statistical expertise for this study of the dynamics of HIV inhibition by interferon.

Edward P. Browne, Benjamin Letham, Cynthia Rudin (2016) A computational model of inhibition of HIV-1 by interferon-alpha. PLoS ONE 11(3): e0152316. (pdf) (PLOS)

Learning from sales transaction data

Estimating arrivals and demand in the presence of stockouts

Suppose we wish to use sales transaction data to estimate demand for a collection of substitutable products. When an item goes out of stock, these data no longer reflect the original demand, since some customers leave with no purchase while others substitute alternative products for the one that was out of stock. We developed a Bayesian hierarchical model for simultaneously estimating the customer arrival rate and primary demand, which we use on data from a local bakery.

Benjamin Letham, Lydia M. Letham, and Cynthia Rudin (2016) Bayesian inference of arrival rate and substitution behavior from sales transaction data with stockouts. In: Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, KDD '16. (pdf) (video)

Bundle pricing from retail transaction data

Bundle offers are often a low-risk way for retailers to increase their profits, if they are priced correctly. We show how historical sales data for the individual items in the bundle can be leveraged to predict how profits will be affected by introducing a bundle at a particular price. We use a copula model that considers the correlations in item demands, and requires far less stringent assumptions than past work in bundling. This work was done during my summer internship at IBM Research.

Benjamin Letham, Wei Sun, and Anshul Sheopuri (2014) Latent variable copula inference for bundle pricing from retail transaction data. In: Proceedings of the 31st International Conference on Machine Learning, ICML '14, pages 217-225. (pdf) (errata)

Predictions from rules

Learning a decision list to predict stroke risk

A decision list is a particular way of organizing association rules to form a classifier which can be both powerful and interpretable. We developed a Bayesian approach for fitting decision lists and used it to create a simple system for predicting stroke risk in atrial fibrillation patients (a la CHADS2).

Benjamin Letham, Cynthia Rudin, Tyler H. McCormick, and David Madigan (2015) Interpretable classifiers using rules and Bayesian analysis: building a better stroke prediction model. Annals of Applied Statistics 9(3): 1350-1371. (pdf) (Python code)

Sequential event prediction

Here we discuss using techniques from supervised ranking to make predictions on data that are sequentially revealed, using a model that is a linear combination of rules. It makes predictions using only the set of past events, not their order, and is demonstrated with email recipient recommendation, medical symptom prediction, and an e-commerce recommender system.

Benjamin Letham, Cynthia Rudin, and David Madigan (2013) Sequential event prediction. Machine Learning 93: 357-380. (pdf)

Association rules, collaborative filtering, and recommender systems

The ECML PKDD Discovery Challenge 2013 was to develop a recommender system for recommending given names to parents looking for the perfect baby name at nameling.net (a now defunct site). For the competition, I developed a recommender system based on association rules, combined with ideas from user-based collaborative filtering. Despite its simplicity and a lack of feature engineering, I won 3rd place for the offline challenge. A major advantage of my approach was that it was fast enough that recommendations could be made entirely online, and I won 2nd place in an online challenge, where my recommender system was actually implemented into the nameling website.

Benjamin Letham (2013) Similarity-weighted association rules for a name recommender system. In: Proceedings of the ECML PKDD 2013 Discovery Challenge Workshop. (pdf)

Learning theory and association rules

We developed a learning theory framework for using association rules for classification and sequential event prediction. We use both VC bounds and results from algorithmic stability. The work was first published at COLT, and then an extended version at JMLR.

Cynthia Rudin, Benjamin Letham, Ansaf Salleb-Aouissi, Eugene Kogan and David Madigan (2011) Sequential event prediction with association rules. In: Proceedings of the 24th Annual Conference on Learning Theory, COLT '11, pages 615-634. (pdf)

Cynthia Rudin, Benjamin Letham, David Madigan (2013) Learning theory analysis for association rules and sequential event prediction. Journal of Machine Learning Research 14: 3385-3436. (pdf)

Information retrieval

Growing a list using the Internet

There are many situations in which important information is scattered across the Internet in many incomplete lists. Given a few seed examples of what we're interested in, can we find and compile all of these incomplete lists of related items to grow a complete list in an unsupervised way? We developed an algorithm for doing this and which works well enough to be useful on a wide range of list growing problems. This work was presented at ECML PKDD 2013.

Benjamin Letham, Cynthia Rudin, and Katherine Heller (2013) Growing a list. Data Mining and Knowledge Discovery 27: 372-395. (pdf) (supplement)

This project received some attention in the media, including a segment about the project on 89.7 WGBH Boston Public Radio. A recording of the live interview is here.

Neuroscience

Cross-sensory interactions

While working at The Martinos Center for Biomedical Imaging at Mass. General Hospital I was involved in a study of cross-sensory interactions (auditory stimuli activate visual cortex and vice versa) using MEG and fMRI.

Tommi Raij, Jyrki Ahveninen, Fa-Hsuan Lin, Thomas Witzel, Iiro P. Jaaskelainen, Benjamin Letham, Emily Israeli, Cherif Sahyoun, Christos Vasios, Steven Stufflebeam, Matti Hamalainen (2010) Onset timing of cross-sensory activations and multisensory interactions in auditory and visual sensory cortices. European Journal of Neuroscience, 31(10): 1772-1782. (pdf)

The difficulties of measuring the timing of weak cross-sensory interactions led to this work:

Benjamin Letham and Tommi Raij (2011) Statistically robust measurement of evoked response onset latencies. Journal of Neuroscience Methods, 194(2): 374-379. (pdf)

Machine learning-themed videos

|

AISTATS paper reaction | Mega Compilation!!Paper reaction videos are the latest trend sweeping AI and ML. Efforts are underway to entirely replace the peer review process with the number of likes and subscribes on reaction videos. This video is a compilation of just a few of the reaction videos for a recent AISTATS paper. A lot of excitement! It's gonna be great! |

|

If AI papers were pharmaceuticals (a parody commercial)Do you suffer from high-dimensional Bayesian optimization? Alebo can help! Watch this commercial to understand how Alebo can make your life happier, your food tastier, and your friends funnier. |

|

Stockouts and substitutions at Lando's BakeryWhen KDD required me to make a video describing our paper on stockouts and substitutions at a bakery, I decided it was the perfect opportunity to try making a stop motion video, something I had always wanted to do. It was my first but not my last, and it was every bit as fun as I imagined it would be. Perhaps not quite what the conference organizers had in mind, but I think it got the ideas across pretty well! |

Data science posts

|

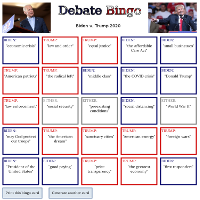

Debate bingo: 2020 presidential editionThe second edition of Debate Bingo! I once again extracted commonly used phrases from each candidate to create a bingo card generator, for use during the debates. |

|

In Defense of FahrenheitOn a scale of 0 to 100, how warm is it? I combine Census data with daily temperatures from nearly 4000 weather stations to estimate the distribution of temperatures experienced by people in the U.S. The oft-maligned Fahrenheit scale nearly perfectly captures this distribution and is a great temperature scale for U.S. weather. |

|

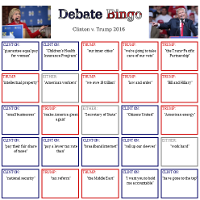

Debate bingo: 2016 presidential editionPresidential candidates tend to repeat themselves, and our two for 2016 are no exception. I extracted some of their most commonly used phrases and used them to create a bingo card generator, for use during the debates. |

|

The best part of a lightsaber duel is the talkingWhy are the original Star Wars lightsaber duels so much better than those from the Prequels? It's not the simple fight choreography, it's the talking. In this post I quantify the balance between talking and fighting in Star Wars lightsaber duels and show that there is a clear difference between the Original Trilogy and the Prequels. Unfortunately, The Force Awakens is a typical Prequels duel. |

|

Was 2015 Boston's worst winter yet?When I finally had some time after spending the entire month of February shoveling snow, I did an analysis of snowfall data to determine exactly how unusual of a winter it was. Although 2015 beat the all-time total snowfall record by only a couple inches, a deeper look at the data revealed that this was by far the worst winter in recorded history. |

Technical expositions

On NP-completeness

The theory of NP-completeness has its roots in a foundational result by Cook, who showed that Boolean satisfiability (SAT) is NP-complete and thus unlikely to admit an efficient solution. In this short paper I prove an analogous result using binary integer programming in the place of SAT. The proof is notationally cleaner and more straightforward to those for whom the language of integer programming is more natural than that of SAT.

Benjamin Letham (2011) An integer programming proof of Cook's theorem of NP-completeness. (pdf)

On Bayesian Analysis

In this work I provide a somewhat rigorous yet simultaneously informal introduction to Bayesian analysis. It covers everything from the theory of posterior asymptotics to practical considerations of MCMC sampling. It assumes the reader is comfortable with basic probability and some mathematical rigor.

Benjamin Letham (2012) An overview of Bayesian analysis. (pdf)

About me

When I'm not working on research, I enjoy sailing, traveling, playing the violin, and programming. I am also a developer and maintainer of the Prophet time series forecasting package for R and Python.My Erdös number is 4.